📌 What is Computing Bias?

Bias: An inclination or prejudice in favor of or against a person or a group of people, typically in a way that is unfair.

Computing bias occurs when computer programs, algorithms, or systems produce results that unfairly favor or disadvantage certain groups. This bias can result from biased data, flawed design, or unintended consequences of programming.

🎥 Example: Netflix Recommendation Bias

Netflix provides content recommendations to users through algorithms. However, these algorithms can introduce bias in several ways:

🔍 How Bias Can Occur:

- Majority Preference Bias:

  - Recommending mostly popular content, making it hard for less popular or niche content to be discovered.

- Filtering Bias:

  - Filtering out content that doesn’t fit a user’s perceived interests based on limited viewing history.

  - For example, if a user primarily watches romantic comedies, Netflix may avoid suggesting documentaries or foreign films, even if the user would enjoy them.
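To make these two patterns concrete, here is a toy sketch. It does not describe how Netflix's real recommender works. The catalog, the view counts, and the function names `popularity_recommend` and `history_filter_recommend` are all hypothetical. The first function ranks titles only by popularity. The second only suggests genres the user has already watched.

```python
# Hypothetical catalog: title -> (genre, total views across all users).
CATALOG = {
    "Action A": ("action", 12_000),
    "Romcom A": ("romcom", 9_000),
    "Romcom B": ("romcom", 7_500),
    "Documentary A": ("documentary", 800),
    "Foreign Film A": ("foreign", 600),
}


def popularity_recommend(catalog, k):
    """Majority preference bias: rank every title purely by total views."""
    ranked = sorted(catalog, key=lambda title: catalog[title][1], reverse=True)
    return ranked[:k]


def history_filter_recommend(catalog, watched, k):
    """Filtering bias: only suggest unwatched titles from genres already watched."""
    seen_genres = {catalog[title][0] for title in watched}
    candidates = [
        title for title in catalog
        if catalog[title][0] in seen_genres and title not in watched
    ]
    return sorted(candidates, key=lambda title: catalog[title][1], reverse=True)[:k]


print(popularity_recommend(CATALOG, 3))                    # ['Action A', 'Romcom A', 'Romcom B']
print(history_filter_recommend(CATALOG, ["Romcom A"], 3))  # ['Romcom B']
```

Neither function ever surfaces "Documentary A" or "Foreign Film A" to this user, even though nothing says the user would dislike them.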

🧐 How Does Computing Bias Happen?

Computing bias can occur for various reasons, including:

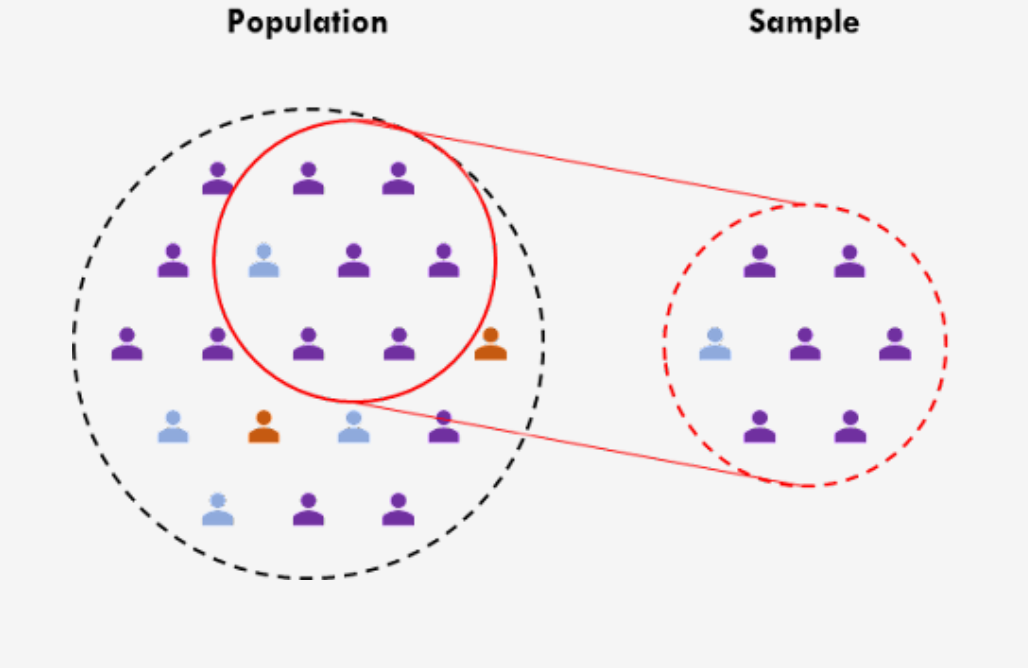

📂 1. Unrepresentative or Incomplete Data:

- Algorithms trained on data that doesn’t represent real-world diversity tend to produce biased results.

📉 2. Flawed or Biased Data:

- Historical or existing prejudices reflected in the training data can lead to biased outputs.

📝 3. Data Collection & Labeling:

- Human annotators may introduce bias during the data labeling process because of their own cultural or personal assumptions.
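One simple way to catch unrepresentative data before training is to compare each group's share of the dataset with its share of the population the system will serve. The sketch below assumes that you already know those population shares. The group names, counts, shares, and the 5-point tolerance are all made up for illustration.

```python
def representation_gaps(sample_counts, population_shares, tolerance=0.05):
    """Flag groups whose share of the training data falls short of their
    share of the population by more than `tolerance` (absolute difference)."""
    total = sum(sample_counts.values())
    gaps = {}
    for group, expected in population_shares.items():
        actual = sample_counts.get(group, 0) / total
        if expected - actual > tolerance:
            gaps[group] = round(expected - actual, 3)
    return gaps


# Hypothetical numbers: 1,000 training records vs. assumed population shares.
training_counts = {"group_a": 800, "group_b": 180, "group_c": 20}
population = {"group_a": 0.60, "group_b": 0.25, "group_c": 0.15}

print(representation_gaps(training_counts, population))  # {'group_b': 0.07, 'group_c': 0.13}
```

A check like this only catches gaps in groups you thought to measure. It cannot detect flawed historical labels or annotator bias.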

📊 Explicit Data vs. Implicit Data

📝 Explicit Data

Definition: Data that the user or programmer directly provides.

- Example: On Netflix, users input personal information such as name, age, and preferences. They can also rate shows or movies.

🔍 Implicit Data

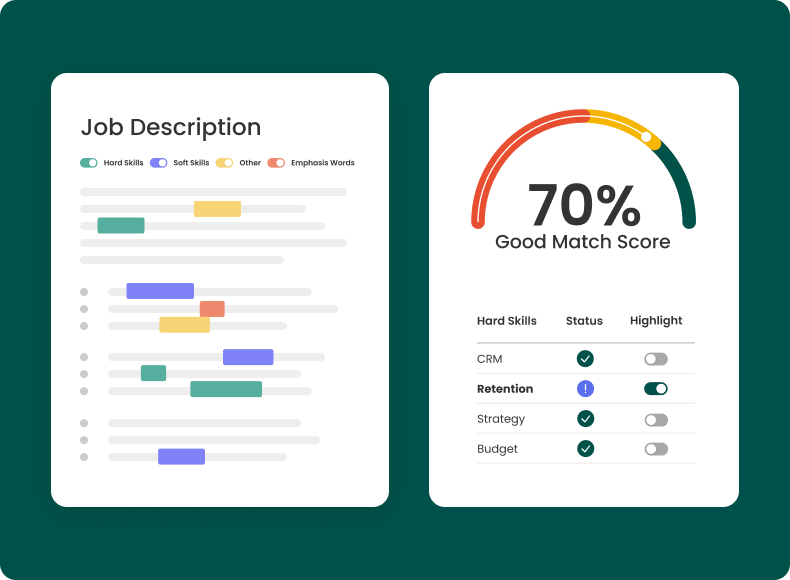

Definition: Data that is inferred from the user’s actions or behavior, not directly provided.

- Example: Netflix tracks your viewing history, watch time, and interactions with content. This data is then used to recommend shows and movies that Netflix thinks you might like.
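The difference between the two kinds of data can be sketched in a few lines. Explicit data is a value the user states directly. Implicit data is a guess computed from behavior. The ratings, the watch log, and the rule "preference = share of total watch time" are hypothetical assumptions, not Netflix's actual method.

```python
# Explicit data: the user states it directly (e.g., a star rating).
explicit_ratings = {"Romcom A": 5, "Documentary A": 4}

# Implicit data: a hypothetical watch log of (title, genre, minutes watched).
watch_log = [
    ("Romcom A", "romcom", 110),
    ("Romcom B", "romcom", 95),
    ("Documentary A", "documentary", 10),
]


def inferred_genre_preference(log):
    """Infer genre preference as each genre's share of total watch time."""
    totals = {}
    for _title, genre, minutes in log:
        totals[genre] = totals.get(genre, 0) + minutes
    grand_total = sum(totals.values())
    return {genre: minutes / grand_total for genre, minutes in totals.items()}


prefs = inferred_genre_preference(watch_log)
print(prefs)
```

The user rated the documentary 4 stars. The inferred preference nonetheless puts documentaries under 5% because only 10 minutes were watched. The inference may simply be wrong; for example, the rest could have been watched on another account.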

⚖️ Implications

- Implicit Data can reinforce bias by suggesting content based on past behavior, which may limit the diversity of recommendations and keep users from discovering new genres.

- Explicit Data is generally more accurate but can still be biased if user input is limited or influenced by the design of the platform.
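The reinforcing effect of implicit data can be seen in a deliberately simplified feedback-loop simulation. It assumes a recommender that always suggests the genre with the most watch time so far. It also assumes the user always watches that suggestion. Both assumptions and the starting numbers are hypothetical.

```python
def simulate_feedback_loop(initial_minutes, rounds, minutes_per_round=60):
    """Each round, recommend only the genre with the most watch time so far;
    the (assumed) user watches it, which adds to that genre's total."""
    minutes = dict(initial_minutes)
    for _ in range(rounds):
        top_genre = max(minutes, key=minutes.get)
        minutes[top_genre] += minutes_per_round
    total = sum(minutes.values())
    return {genre: m / total for genre, m in minutes.items()}


start = {"romcom": 120, "documentary": 100, "foreign": 80}
after = simulate_feedback_loop(start, rounds=10)
print(after["romcom"])  # 0.8
```

Romantic comedies start at 40% of watch time and end at 80%. This happens not because the user's tastes changed, but because the system only offered more of the same.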

🤔 Popcorn Hack #1

What is an example of Explicit Data?

A) Netflix recommends shows based on your viewing history.

B) You provide your name, age, and preferences when creating a Netflix account.

C) Netflix tracks the time you spend watching certain genres.

📝 Types of Bias

🤖 Algorithmic Bias

- Algorithmic bias is bias produced by systematic, repeatable errors in a computer system that lead to unfair or inaccurate results.

- Example: An experimental hiring algorithm at Amazon was trained on past hiring data in which male candidates had been hired more often than female candidates. Because those historical hiring practices were biased toward men, the system learned to favor male candidates over female candidates.
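A minimal sketch shows how "learning from history" can copy a historical bias. This is not Amazon's system. The records are hypothetical, and the "model" simply estimates the past hire rate for each (gender, experience) pair.

```python
# Hypothetical historical records: (gender, years_experience, was_hired).
HISTORY = [
    ("male", 5, True), ("male", 5, True), ("male", 5, True), ("male", 5, False),
    ("female", 5, True), ("female", 5, False), ("female", 5, False), ("female", 5, False),
]


def learned_hire_rates(history):
    """'Training': estimate the past hire rate for each (gender, experience) pair."""
    counts = {}
    for gender, years, hired in history:
        hires, total = counts.get((gender, years), (0, 0))
        counts[(gender, years)] = (hires + int(hired), total + 1)
    return {key: hires / total for key, (hires, total) in counts.items()}


def score(model, gender, years):
    """Score a new candidate using the learned historical rate."""
    return model.get((gender, years), 0.0)


model = learned_hire_rates(HISTORY)
print(score(model, "male", 5), score(model, "female", 5))  # 0.75 0.25
```

Two candidates with identical experience get different scores only because past decisions differed. Dropping the gender column alone may not fix this if other features act as proxies for it.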

📈 Data Bias

- Data bias occurs when the data itself includes bias caused by incomplete or erroneous information.

- Example: A healthcare AI model predicts lower disease risk for certain populations because it was trained mostly on patients from one demographic. Since the model was never exposed to enough data from other demographics, it treats patterns from that one group as if they applied to everyone, so its predictions for other groups are unreliable.
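This failure can be reproduced with a one-feature toy classifier. The biomarker readings are invented. The idea that group B shows the disease at lower readings is a hypothetical assumption chosen to illustrate the point. The model learns its cutoff from group A only.

```python
from statistics import mean


def train_threshold(healthy, sick):
    """Fit a one-feature classifier: threshold halfway between class means."""
    return (mean(healthy) + mean(sick)) / 2


def accuracy(threshold, healthy, sick):
    """Fraction classified correctly (sick means reading above the threshold)."""
    correct = sum(v <= threshold for v in healthy) + sum(v > threshold for v in sick)
    return correct / (len(healthy) + len(sick))


# Hypothetical biomarker readings. Training data comes only from group A.
group_a = {"healthy": [1, 2, 3], "sick": [7, 8, 9]}
# In this made-up scenario, group B develops the disease at lower readings.
group_b = {"healthy": [0, 1, 2], "sick": [3, 4, 4.5]}

threshold = train_threshold(group_a["healthy"], group_a["sick"])
print(threshold)                                                # 5.0
print(accuracy(threshold, group_a["healthy"], group_a["sick"]))  # 1.0
print(accuracy(threshold, group_b["healthy"], group_b["sick"]))  # 0.5
```

Every sick patient in group B is labeled healthy, so the model "predicts lower disease risk" for that group, exactly as in the example.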

🧠 Cognitive Bias

- Cognitive bias occurs when a person unintentionally introduces their own bias into the data.

- Example: A researcher conducting a study on social media usage unconsciously selects data that supports their belief that too much screen time leads to lower grades. This is a form of cognitive bias called confirmation bias because the researcher is searching for information to support their beliefs.
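The effect of confirmation bias on a conclusion can be shown with a small, entirely hypothetical survey. The full data shows no grade gap between light and heavy screen users. Keeping only the records that "fit" the belief creates a gap. The 4-hour cutoff and the 80% grade line are assumptions for illustration.

```python
from statistics import mean

# Hypothetical survey: (daily screen-time hours, grade percentage).
students = [(1, 70), (2, 90), (1, 85), (2, 75), (5, 88), (6, 72), (5, 78), (6, 82)]


def grade_gap(records, heavy_cutoff=4):
    """Average grade of light users minus average grade of heavy users."""
    light = [g for h, g in records if h < heavy_cutoff]
    heavy = [g for h, g in records if h >= heavy_cutoff]
    return mean(light) - mean(heavy)


# Confirmation bias: keep only records that support the belief.
cherry_picked = [(h, g) for h, g in students
                 if (h < 4 and g >= 80) or (h >= 4 and g < 80)]

print(grade_gap(students))       # 0
print(grade_gap(cherry_picked))  # 12.5
```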

🤔 Popcorn Hack #2

What is an example of Data Bias?

A) A hiring algorithm favors male candidates because the training data contains a disproportionate number of male resumes.

B) A system is trained on a dataset where certain groups, such as people with darker skin tones, are underrepresented.

C) A researcher intentionally selects data that supports their own beliefs about the impact of screen time on grades.

Intentional Bias vs Unintentional Bias

Intentional Bias: The deliberate introduction of prejudice or unfairness into algorithms or systems, often by individuals or organizations, to achieve a specific outcome or advantage.

Example: A hiring algorithm designed to favor candidates from certain backgrounds by prioritizing resume keywords that are associated with only those groups.

- Goal of the algorithm: Select the most qualified candidates based on their resumes and experience.

- However, the people who create this algorithm might intentionally include factors that are biased toward certain groups.

For example, if the algorithm is designed to prioritize resumes with certain words or experiences that are more common among a specific gender or ethnic group, it might unfairly favor candidates from that group over others.

Also, if the developers deliberately give extra weight to leadership positions at high-profile companies that are predominantly male or white, the algorithm may disadvantage women or people of color who have the same qualifications but worked in different environments.
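A keyword-weighted resume scorer shows how a design choice like this can be built in. The keywords and weights are hypothetical. The "fortune 500 leadership" term stands in for a proxy that rewards the environment a candidate worked in rather than their ability.

```python
# Hypothetical keyword weights chosen by the developers.
WEIGHTS = {
    "python": 3,                  # job-relevant skill
    "team lead": 2,               # job-relevant experience
    "fortune 500 leadership": 4,  # proxy: rewards one kind of work environment
}


def score_resume(text, weights=WEIGHTS):
    """Sum the weights of every keyword phrase that appears in the resume."""
    text = text.lower()
    return sum(w for phrase, w in weights.items() if phrase in text)


resume_1 = "Python developer, team lead, Fortune 500 leadership program"
resume_2 = "Python developer, team lead at a community nonprofit"

print(score_resume(resume_1), score_resume(resume_2))  # 9 5
```

Both resumes list the same skills and leadership role. The gap comes entirely from the proxy weight the developers chose.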

Unintentional Bias: Occurs when algorithms, often trained on flawed or incomplete data, produce results that unfairly discriminate against certain groups even though no one set out to make them do so.

Example: Facial recognition software.

- Goal of the program: Designed to identify people based on their facial features.

- However, if the software is trained using a large dataset of photos primarily of one race, it can have trouble identifying individuals who look different.

Here is how this can play out in practice:

If the software is trained using pictures of people but the majority of those photos are of lighter-skinned individuals, the system may have trouble accurately recognizing people with darker skin tones.

This bias is unintentional: the developers did not purposefully choose to exclude people with darker skin; the dataset they used simply happened to be unbalanced.

As a result, the system works better for lighter-skinned people and struggles with darker-skinned people, even though the goal is to treat everyone equally.
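A gap like this is easy to miss if a system is judged by one overall accuracy number. The sketch below reports accuracy separately for each group. The test results are made up for illustration and are not measurements of any real product.

```python
from collections import defaultdict

# Hypothetical test results: (skin-tone group, was the face matched correctly?)
results = (
    [("lighter", True)] * 95 + [("lighter", False)] * 5
    + [("darker", True)] * 70 + [("darker", False)] * 30
)


def accuracy_by_group(records):
    """Report accuracy separately for each group instead of one overall number."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, ok in records:
        total[group] += 1
        correct[group] += ok  # True counts as 1, False as 0
    return {group: correct[group] / total[group] for group in total}


overall = sum(ok for _, ok in results) / len(results)
per_group = accuracy_by_group(results)
print(overall)    # 0.825
print(per_group)  # {'lighter': 0.95, 'darker': 0.7}
```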

🤔 Popcorn Hack #3

What is an example of Unintentional Bias?

One person describes a biased scenario. Classmates guess whether it’s intentional or unintentional.

You’ll get cookies! 🍪🍪

🌟 Mitigation Strategies

Mitigation strategies aim to reduce computing bias at every stage of an algorithm’s lifecycle, largely by gathering and using more diverse and representative data.

🔍 1. Pre-processing Phase (Model Planning & Preparation)

- Purpose: Identify and fix issues in data collection to ensure accurate model training.

- Actions:

  - Managing missing data.

  - Ensuring data diversity.

  - Selecting relevant variables.

- ✅ Outcome: Prevents biased data from being used to train the model.
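Two common pre-processing steps can be sketched in a few lines: filling in missing values, and weighting records so that a smaller group is not drowned out. The data and group labels below are hypothetical. Median filling and inverse-frequency weighting are just two simple options among many. Median filling can itself add bias if values are missing mostly for one group.

```python
from statistics import median


def fill_missing(values):
    """Replace missing entries (None) with the median of the observed ones."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    return [fill if v is None else v for v in values]


def balancing_weights(groups):
    """Give each record a weight so every group contributes equally in total."""
    counts = {}
    for g in groups:
        counts[g] = counts.get(g, 0) + 1
    n, n_groups = len(groups), len(counts)
    return [n / (n_groups * counts[g]) for g in groups]


ages = [34, None, 29, 41, None]
groups = ["a", "a", "a", "b", "b"]  # hypothetical group labels

print(fill_missing(ages))         # [34, 34, 29, 41, 34]
print(balancing_weights(groups))  # group "a" records weigh 5/6 each, group "b" 1.25 each
```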

🧩 2. In-processing Phase (Algorithm Development & Validation)

- Purpose: Address biases during training and validation of AI algorithms.

- Actions:

  - Inserting synthetic samples representing minority cases.

  - Using cross-validation strategies.

- ✅ Outcome: Promotes equal representation across demographics.
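Both in-processing actions can be sketched with the standard library. `synthetic_samples` is a simplified SMOTE-style oversampler. Full SMOTE picks among the k nearest neighbors; this version always uses the single nearest one. `stratified_folds` deals each class round-robin into folds so every fold keeps a similar class mix. Both function names and the data are hypothetical. The oversampler needs at least two minority points.

```python
import random


def synthetic_samples(minority, n_new, seed=0):
    """Simplified SMOTE-style oversampling: each new point lies on the line
    segment between a random minority point and its nearest minority neighbor."""
    rng = random.Random(seed)

    def nearest(p):
        others = [q for q in minority if q is not p]
        return min(others, key=lambda q: sum((a - b) ** 2 for a, b in zip(p, q)))

    new_points = []
    for _ in range(n_new):
        p = rng.choice(minority)
        q = nearest(p)
        t = rng.random()  # position along the segment, in [0, 1)
        new_points.append(tuple(a + t * (b - a) for a, b in zip(p, q)))
    return new_points


def stratified_folds(labels, k):
    """Assign record indices to k folds, dealing each class out in turn."""
    folds = [[] for _ in range(k)]
    by_class = {}
    for i, label in enumerate(labels):
        by_class.setdefault(label, []).append(i)
    position = 0
    for indices in by_class.values():
        for i in indices:
            folds[position % k].append(i)
            position += 1
    return folds


minority = [(1.0, 2.0), (1.5, 2.5), (2.0, 2.0)]
extra = synthetic_samples(minority, n_new=4)

labels = ["maj"] * 6 + ["min"] * 3
folds = stratified_folds(labels, k=3)
print(folds)  # [[0, 3, 6], [1, 4, 7], [2, 5, 8]]
```

In practice, synthetic samples should be generated only from the training folds. Otherwise, information from the validation data leaks into training.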

🚀 3. Post-processing Phase (Deployment & Usage)

- Purpose: Implement the model and ensure fair application in real-world settings.

- Actions:

  - Monitoring model performance in deployment.

  - Adjusting outputs to reduce bias.

- ✅ Outcome: Ensures the model functions fairly for all user groups.
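Monitoring a deployed model can start with something as simple as comparing approval rates across groups. The sketch below applies the "four-fifths" rule of thumb from US employment guidelines. Under that rule, a group's selection rate below 80% of the highest group's rate is treated as a warning sign. The decision log and group names are hypothetical.

```python
def selection_rates(decisions):
    """decisions: list of (group, approved) pairs logged from the live system."""
    totals, approved = {}, {}
    for group, ok in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def passes_four_fifths(rates, ratio=0.8):
    """False when the lowest group's rate is under `ratio` times the highest."""
    return min(rates.values()) >= ratio * max(rates.values())


decisions = (
    [("x", True)] * 50 + [("x", False)] * 50
    + [("y", True)] * 30 + [("y", False)] * 70
)

rates = selection_rates(decisions)
print(rates)                      # {'x': 0.5, 'y': 0.3}
print(passes_four_fifths(rates))  # False
```

A failed check like this is a signal to investigate and possibly adjust outputs; it does not by itself prove the model is unfair.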

- Pre-processing Phase (Model Planning and Preparation)

  - Looking for and fixing any problems in the data collection process so that the data is accurate for model training (managing missing data, ensuring data diversity, selecting relevant variables, etc.).

  - Prevents biased data from being used to train the model.

- In-processing Phase (Algorithm Development & Validation)

  - In-processing covers all activities surrounding the training and validation of an AI algorithm (inserting synthetic samples that are representative of minority-class cases, using cross-validation strategies, etc.).

  - Identifies biases or other vulnerabilities in the model and promotes equal representation for all demographics.

- Post-processing Phase (Clinical Deployment)

  - This phase covers the model after it has been deployed and is being used by others in a live environment.

  - It collects clinical data and interprets it to ensure compliance and refine future applications.

📚 Computing Bias - Homework Questions

✍️ Short-Answer Question

Explain the difference between implicit and explicit data. Provide an example of each.

💯 Scoring Rubric:

| Criteria | Description | Points |
|---|---|---|
| Multiple-Choice Questions (0.7 points total) | Each correct answer is worth 0.1 points. | |
| Question 1 | Award 0.1 points if the correct option is selected. | 0.1 |
| Question 2 | Award 0.1 points if the correct option is selected. | 0.1 |
| Question 3 | Award 0.1 points if the correct option is selected. | 0.1 |
| Question 4 | Award 0.1 points if the correct option is selected. | 0.1 |
| Question 5 | Award 0.1 points if the correct option is selected. | 0.1 |
| Question 6 | Award 0.1 points if the correct option is selected. | 0.1 |
| Question 7 | Award 0.1 points if the correct option is selected. | 0.1 |
| Short-Answer Question (0.3 points total) | Explanation of implicit vs. explicit data, with accurate examples. | |
| Clarity & Accuracy | Clear, concise, and correct explanation of implicit vs. explicit data. | 0.15 |
| Examples Provided | Provides appropriate examples for both implicit and explicit data. | 0.15 |
| Total | | 1.0 |